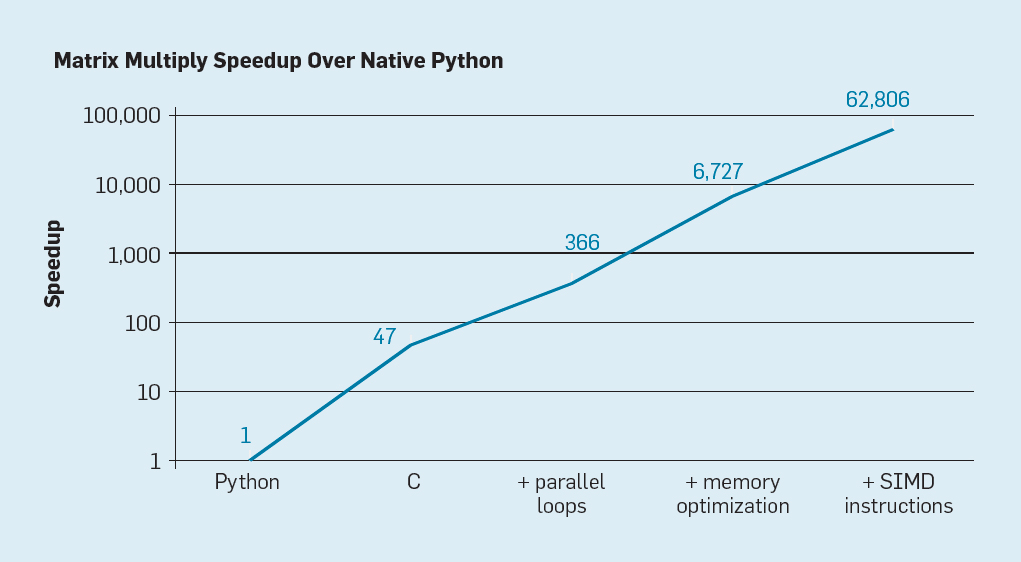

Perhaps you've seen this image presented by the 2017 Turing Award laureates Hennessy and Patterson:

The pioneers of RISC architecture claim that a matrix multiplication loop is nearly 50 times faster with C than with Python. Why is that?

The short answer: C is compiled to native machine code, while Python is interpreted.

Now, what does that mean, and why does that make a difference?

Aside: C can be interpreted, and Python can be compiled (as with the PyPy project). However, in the vast majority of cases, C is compiled and Python is interpreted. Technically speaking, a programming language is just a specification that implementations must follow, and some languages, like Haskell, have both a widespread compiler and a widespread interpreter. Nonetheless, this typically does not apply to C and Python: C tends to be only compiled, and Python only interpreted.

Compilation

Typically, programs in C are compiled. That means that when we write a simple hello world program:

    #include <stdio.h>

    int main() {
        printf("%s\n", "Hello World!");
        return 0;
    }

and compile it using gcc, we might get something like this:

    7f45 4c46 0201 0100 0000 0000 0000 0000
    0300 3e00 0100 0000 3005 0000 0000 0000
    4000 0000 0000 0000 2819 0000 0000 0000
    0000 0000 4000 3800 0900 4000 1d00 1c00
    0600 0000 0400 0000 4000 0000 0000 0000
    ...

All these numbers are hexadecimal, so the first group, 7f45, is actually 32581 in decimal, or 111111101000101 in binary.
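The conversion above can be checked with a few lines of Python. This is only a small sketch, and the variable names are illustrative. It also decodes the first four bytes of the dump, which spell out the ELF magic number that marks the file as an executable in the ELF format used on Linux.

```python
# First 16-bit group from the hex dump above.
first_group = "7f45"

value = int(first_group, 16)   # parse the text as a base-16 number
print(value)                   # 32581
print(bin(value)[2:])          # 111111101000101

# The first four bytes of the dump, read as raw bytes.
magic = bytes.fromhex("7f454c46")
print(magic)                   # b'\x7fELF'
```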

You may have heard that computers can only read 1's and 0's. With some simplification, it's true: computers run on electricity, and digital circuits are built to distinguish two states, on and off. The binary digits control whether electricity flows or not.

So when we compile our code in C, we translate it into a set of binary numbers for our CPUs to execute. The CPU sends electric signals based on those binary numbers, and out comes our glorious Hello World program. Compilation, in essence, is translating C syntax into a syntax that our processors can understand immediately. We can understand it as a two-step process:

- Compile source code into a native binary format.

- The CPU executes this native binary.

Interpretation

Then what's interpretation?

If we write a (noticeably shorter) hello world program in Python:

    print("Hello World!")

all we have to do is run the python command:

    python3 hello_world.py

We don't compile the code in one step and then execute a binary file in another. We just run a program, python, over our code.

What is this python, then? It's something called an interpreter.

Most Python users use CPython, which means that their Python code is compiled to an intermediate form called bytecode and then executed by an interpreter written in C, whose main loop lives in a file named ceval.c that runs to several thousand lines. This interpreter has already been compiled for your native architecture, so in essence, running Python code means running a compiled C program that in turn runs your Python code.
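To make the bytecode less abstract, here is a small sketch using the standard library's dis module, which decodes the bytecode CPython has generated for a function. The function add is made up for illustration, and the exact instruction names differ between Python versions, so no output is shown.

```python
import dis

def add(a, b):
    return a + b

# Every function carries its compiled bytecode as raw bytes.
raw_bytecode = add.__code__.co_code
print(type(raw_bytecode), len(raw_bytecode))

# dis decodes those bytes into named instructions.
opnames = [instr.opname for instr in dis.get_instructions(add)]
print(opnames)
```

Running this prints a short list of instruction names: loads for the two arguments, an instruction for the addition, and a return.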

This makes it easy to understand why Python is so much slower than C. To run Python, you need the following steps:

1. Transform the source code into an intermediate format, bytecode. (This can be done ahead of time.)

2. The CPU runs the interpreter, which fetches the next bytecode instruction.

3. The interpreter works out what that instruction means and carries it out, using many native instructions to do so.

4. The interpreter moves on to the next instruction. Steps 2 to 4 repeat until the program exits.

From our steps, we can see that the interpreter acts like another machine in charge of running the Python code. In fact, we do call steps 2 to 4 a virtual machine, meaning that ceval.c is a "virtual computer" on top of our CPU. Having an intermediary between the CPU and the code slows things down quite a bit, and for this reason Python lags behind its counterpart, C.
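The loop described above can be sketched as a toy virtual machine. This is not how ceval.c is actually written. The opcodes PUSH, ADD and MUL and the function run are invented for illustration, but the fetch, decode, execute shape is the same idea.

```python
def run(program):
    """Execute a list of (opcode, argument) pairs on a tiny stack machine."""
    stack = []
    pc = 0  # program counter: index of the next instruction
    while pc < len(program):
        opcode, arg = program[pc]      # fetch the next instruction
        pc += 1
        if opcode == "PUSH":           # decode it, then execute it
            stack.append(arg)
        elif opcode == "ADD":
            right = stack.pop()
            left = stack.pop()
            stack.append(left + right)
        elif opcode == "MUL":
            right = stack.pop()
            left = stack.pop()
            stack.append(left * right)
        else:
            raise ValueError(f"unknown opcode: {opcode}")
    return stack.pop()

# Hypothetical program computing (2 + 3) * 4.
program = [
    ("PUSH", 2),
    ("PUSH", 3),
    ("ADD", None),
    ("PUSH", 4),
    ("MUL", None),
]
result = run(program)
print(result)  # 20
```

Even in this toy, a single addition costs a fetch, several comparisons, and two stack pops before any arithmetic happens. A compiled C program would perform the addition directly.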

Then why interpret at all?

The main advantage of interpretation lies in development speed. It is usually much faster to write an implementation in Python than in C, and easier to debug thanks to Python's friendly stack traces. Compiling a multi-million-line C project from scratch can take hours (one reason teams run "nightly builds" that compile the source overnight), while a Python program starts running almost immediately.

The Hello World example above may be contrived, but Python code does become less verbose thanks to dynamic typing. For instance, when we declare a variable in Python, we don't declare its type:

    foo = 47

while in C we would need to declare the type:

    int foo = 47;

While this may not seem like a large difference, dynamic typing allows for more permissive programming, lets us rely on duck typing instead of writing a separate, nearly identical function for each type, and makes algorithms easier to read. All of these factors can let developers write working code in Python in less time than in C, so if execution speed is not critical, Python is a strong choice.
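As a small sketch of duck typing, the function below (total is a made-up name) works on any elements that support the + operator. In C, each element type would typically need its own function.

```python
def total(items):
    """Combine items with +, starting from the first element."""
    iterator = iter(items)
    result = next(iterator)
    for item in iterator:
        result = result + item
    return result

print(total([1, 2, 3]))      # 6
print(total([1.5, 2.5]))     # 4.0
print(total(["ab", "cd"]))   # abcd
```

The same function body handles integers, floats, and strings because Python looks up what + means only when the line runs.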

Aside: many people claim that Python is a very readable language, and that some mistake it for pseudocode when they first read it. Personally, however, I dislike dynamic typing because I can't tell what the objects' types are and have to dissect the code to find out the return types. If you use Python, I strongly suggest you use type hints when you can.
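Here is a minimal sketch of what type hints look like. The functions mean and describe are invented for illustration, and the list[float] syntax assumes Python 3.9 or newer.

```python
def mean(values: list[float]) -> float:
    """Return the arithmetic mean of a non-empty list."""
    return sum(values) / len(values)

def describe(name: str, scores: list[float]) -> str:
    """Format a name together with the mean of its scores."""
    return f"{name}: {mean(scores):.1f}"

print(describe("Ada", [90.0, 85.0]))  # Ada: 87.5
```

The interpreter does not enforce these hints at runtime. They document the expected types for the reader, and a separate static checker such as mypy can verify them.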

Julia

Some efforts aim to combine the best of both worlds. One example is the Julia language, whose motto is "walks like python, runs like C". Julia has superbly readable syntax and optional typing, and it uses just-in-time (JIT) compilation to execute at nearly native speeds.

However, because it was first released only in 2012, it is less mature and stable than older languages, especially in its packages (libraries). It also tends to be used as a domain-specific language, focusing on numerical analysis and computational science.

Still, it seems to have a bright future, and I'd be curious to see if it slowly manages to push the statisticians out of R and the physicists out of FORTRAN.

Conclusion

Programming languages, while purely formal languages, still have their own quirks and change over time just as human languages do. We even have dialects in programming languages (like the Lisp family), and rarely do we see a language pop up out of nowhere. Wikipedia even maintains an article on the genealogy of programming languages.

Python and C, in that sense, are two very different languages with very different uses. They, too, will evolve over the years and beget offspring. It will be interesting to see how their children develop, and whether this article will apply to their descendants as well.